AI Megathread

-

@Aria said in AI Megathread:

@Pavel said in AI Megathread:

@MisterBoring Either @Roz or @Aria explained… somewhere up in the higher reaches of this thread. I got a cramp trying to scroll that far.

I explained it here. Roz got mad that she didn’t know the dumb reason they’re called en dash and em dash.

it is admittedly a very dumb reason. It sounds like a reason that someone from Long Island would come up with

I have actually had to unlearn using em dashes because I would do it constantly. I use a lot of parentheses now when previously I would just be like – . People have recently assumed that I was using AI (not great for clinical writing) and thus everything is over-parenthized. Over-parenthesesed?

-

@somasatori said in AI Megathread:

I have actually had to unlearn using em dashes because I would do it constantly. I use a lot of parentheses now when previously I would just be like – . People have recently assumed that I was using AI (not great for clinical writing) and thus everything is over-parenthized. Over-parenthesesed?

Parenthosophized. Add it to the style guides now, please and thank you.

-

@Trashcan said in AI Megathread:

What would you consider an acceptable scale?

Honestly? More mediums. Media. Whichever. Essays, academic papers, hell even clinical notes. The kinds of writing that will really easily look like AI to anyone who doesn’t know what they’re talking about.

But ultimately, it doesn’t even matter if the tool is very nearly perfect. Many people in many settings, even professional ones, won’t run text through a detector, they’ll look at some shitty guide on the internet and declare something to be AI or not. It’s ultimately a human problem, not a detector problem – they’re going to believe what they want to believe and the detection software will be evidence for them either way: “The detector works perfectly without flaws or errors,” when it agrees with them, and “the detector is easily fooled and full of problems and my brain is better” when it disagrees.

Because we’ve still got stupid old people making stupid old people decisions based on metrics from stupid old people times, like the 70s.

@somasatori said in AI Megathread:

People have recently assumed that I was using AI (not great for clinical writing) and thus everything is over-parenthized. Over-parenthesesed?

I’ve started using semicolons more in my notes:

Client reported improved sleep this week — though still experiencing early-morning waking when stressed.

vs

Client reported improved sleep this week; still experiencing early-morning waking when stressed.

-

@Pavel said in AI Megathread:

@somasatori said in AI Megathread:

People have recently assumed that I was using AI (not great for clinical writing) and thus everything is over-parenthized. Over-parenthesesed?

I’ve started using semicolons more in my notes:

Client reported improved sleep this week — though still experiencing early-morning waking when stressed.

vs

Client reported improved sleep this week; still experiencing early-morning waking when stressed.

This is honestly a great idea. I’m really thankful for the suggestion!

-

If your rp isn’t boring and hollow, then it won’t ping as AI even if you use em-dashes for whatever reason. It’s not just the dashes.

-

@Pavel said in AI Megathread:

I’ve started using semicolons more in my notes:

Until some article points out that semicolons also occur more often in AI-generated work than in the average (non-professional) writing, and you’re right back where you’ve started. I’m honestly surprised it isn’t mentioned in the Wikipedia article, since I’ve seen it highlighted elsewhere.

@Trashcan said in AI Megathread:

These tools are aware of the negative ramifications of a false positive and are biased towards not returning them.

And yet they still do, and not necessarily at the 1% false-positive rate they claim. For example, from the Univ of San Diego Legal Research Center:

Recent studies also indicate that neurodivergent students (autism, ADHD, dyslexia, etc…) and students for whom English is a second language are flagged by AI detection tools at higher rates than native English speakers due to reliance on repeated phrases, terms, and words.

This has been widely reported elsewhere too. It’s a real concern, and it has real-world implications for people’s lives when they are falsely accused of cheating/etc.

-

@Faraday said in AI Megathread:

For example, from the Univ of San Diego Legal Research Center:

If we’re getting down to the level of sample size and methodology, it’s probably worth mentioning that this study looked at 88 essays and ‘recent’ in this context was May 2023, or 6 months after the release of ChatGPT. It is safe to assume the technology has progressed.

-

@Trashcan It is good to examine the robustness of the particular studies referenced in that article (some of which were from 2023, not 2024, though), but I’ve seen no evidence that the tech on the whole has gotten any better in this particular regard.

-

@Faraday ok but 88 essays is not a sample size that anyone can take seriously.

-

@hellfrog I’m not really sure what study you’re talking about that specifically had the 88 essays. The Univ of San Diego site I linked to had a whole bunch of studies referenced, and I cited their overall conclusions. I am also drawing from reporting I’ve read in other media sources, but which I don’t have immediately handy.

-

@Faraday said in AI Megathread:

Until some article points out that semicolons also occur more often in AI-generated work than in the average (non-professional) writing, and you’re right back where you’ve started.

I don’t like this game anymore.

-

I’ve got this AI detector thing and I hate it with the hatey black hate sauce.

No, you stupid thing, 100% of this student’s paper isn’t likely to be AI, I’ve watched him building this argument for twelve weeks.

Say, what, this one’s paper is also likely all AI? Who the heck tells AI to do APA formatting so creatively wrongly?

Yeah, right, this is so likely all AI, the student fed the assignment into AI along with the instructions, “Write this in the style of someone who doesn’t know how to write an academic paper trying to write an academic paper.”

I really hope other instructors are not taking this daft thing seriously.

-

@Gashlycrumb

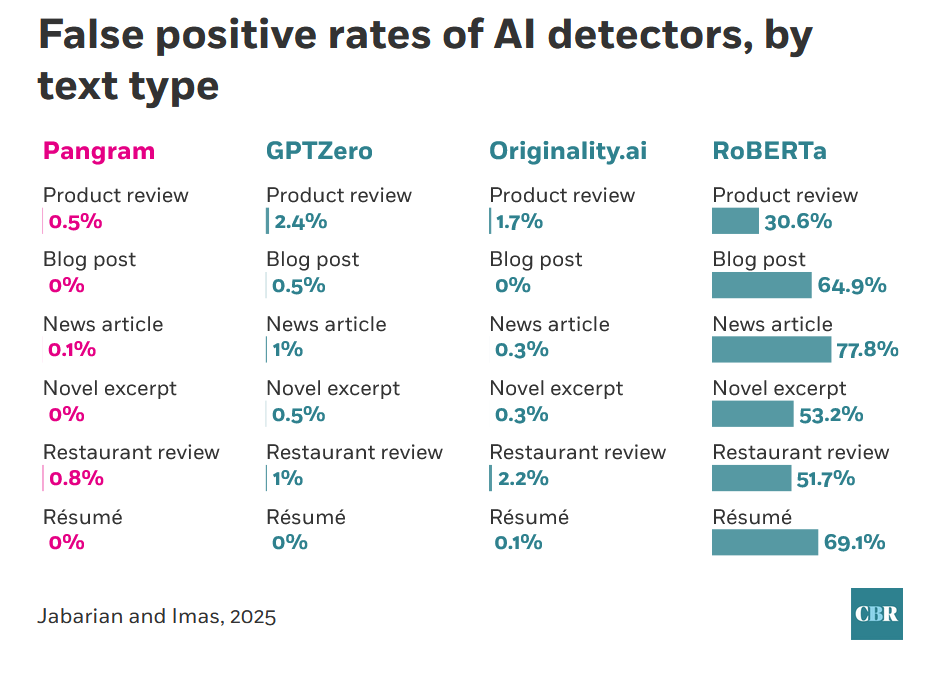

That does sound frustrating, and while I’ve spent this thread defending the odds that AI detectors won’t falsely accuse people, it’s still worth noting that even recent studies find wide disparities between product offerings. From one of the studies already linked:

Clearly RoBERTa, the open-source offering, is not something anyone should be using. I hope there’s some sort of feedback mechanism to the administration that the particular tool they’ve selected is highly unsuited to the task.